True AI Literacy: Helping Students Build Better Mental Models of AI

May 14, 2026

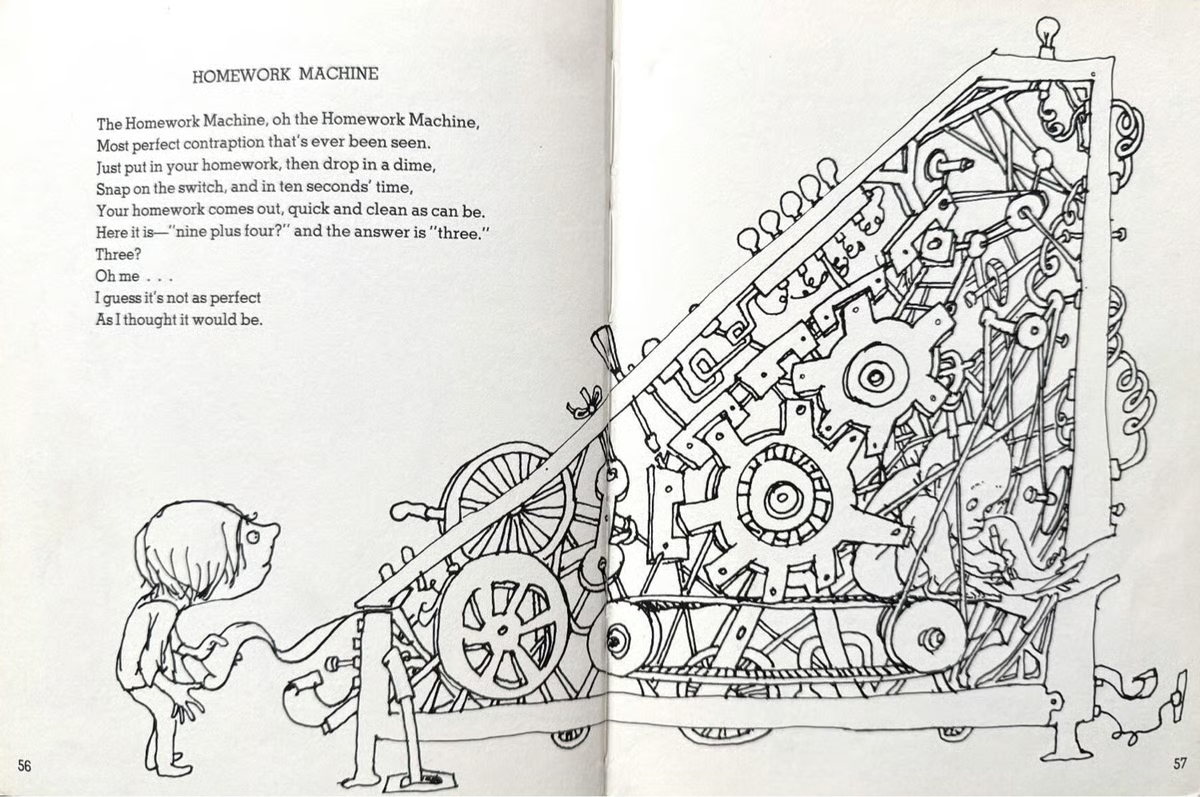

A nostalgic reminder that people have long imagined mysterious machines producing answers for us. (Hat tip to University of Maryland professor Julie Park for reminding us of this Shel Silverstein poem!)

Beyond Using AI, Understanding It

Students are already using AI for everything, everywhere, all at once. They use it to summarize readings, write essays, complete projects, and in some cases, even learn.

But there’s a growing gap between using AI and understanding it. As learning scientists involved in teaching data science, Alice Xu (UCLA) and I have become increasingly interested in a more fundamental question about AI literacy: Do students actually have a useful mental model of how AI works?

A lot of the explanations we hear boil down to “it’s magic” or “it thinks like a human.” Both are intuitive and, unfortunately, both make it difficult to anticipate AI’s mistakes, biases, and strange failures.

This feels important because many conversations about “AI literacy” focus almost entirely on whether students know how to use AI tools (trust me, they figure it out!). But using AI and understanding AI are not the same thing. We worry that many people assume the second automatically follows from the first.

At CourseKata, we think AI literacy should also include some kind of causal mental model, a way of reasoning about what produces AI behavior in the first place.

Why This Matters for Education

We have dedicated our research careers to helping students build useful mental (and statistical) models of complicated systems.

In statistics and data science education, students often begin by memorizing procedures without understanding the underlying mechanisms that produce the data or the pattern of variation. But real learning happens when students develop stripped-down, wrong-but-useful models that help them reason about these data-generating processes.

Most people will never learn transformer architectures, gradient descent, or reinforcement learning algorithms in technical detail. But they do need ways of reasoning about:

- why AI systems can appear incredibly capable,

- why they sometimes fail in bizarre ways,

- why they can optimize the wrong thing,

- and why fluent, confident output does not necessarily imply understanding.

What We Came Up With

So we’ve been looking for a better metaphor.

Here’s what we propose: the “button-pushing explorer.”

The idea draws on classic reinforcement learning research in which early neural networks learned to play Atari games through a simple loop: act → observe → adjust.

Over time, these systems could produce surprisingly sophisticated behavior without understanding the world in the way humans do.
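For readers who like to see a loop as code, here is a minimal sketch of act → observe → adjust in Python. It is a toy illustration, not the Atari systems themselves: the function name `run_explorer`, the three "buttons," and their payoff probabilities are all hypothetical, and the strategy shown (press the best-looking button most of the time, a random one occasionally) is just one simple way to fill in the loop.

```python
import random


def run_explorer(payoffs, steps=2000, explore_rate=0.1, seed=0):
    """Toy 'button-pushing explorer' (illustrative assumption, not a real system).

    payoffs: hypothetical chance that each button returns a reward of 1.
    The explorer never reads these numbers; it only sees the rewards.
    """
    rng = random.Random(seed)
    estimates = [0.0] * len(payoffs)  # running average reward per button
    presses = [0] * len(payoffs)      # how often each button was pressed

    for _ in range(steps):
        # Act: usually press the best-looking button, sometimes a random one.
        if rng.random() < explore_rate:
            button = rng.randrange(len(payoffs))
        else:
            button = max(range(len(payoffs)), key=lambda b: estimates[b])

        # Observe: the environment hands back a reward of 1 or 0.
        reward = 1 if rng.random() < payoffs[button] else 0

        # Adjust: move that button's running average toward the reward.
        presses[button] += 1
        estimates[button] += (reward - estimates[button]) / presses[button]

    return estimates, presses


if __name__ == "__main__":
    estimates, presses = run_explorer([0.2, 0.5, 0.8])
    print("presses per button:", presses)
    print("estimated payoff per button:", [round(e, 2) for e in estimates])
```

Nothing in the loop describes what a button does or why it pays off. The explorer only keeps a tally of what has worked so far, which is typically enough for it to drift toward the most rewarding button.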

We explore this idea more fully in a new article published this week in The Conversation US: “Button‑pushing explorers: How to grasp that AI agents can do amazing things while knowing nothing.”

We’d also highly recommend this wonderful collection of AI metaphors and mental models: http://metaphorsofai.org/

What are the metaphors you prompt by? Let us know (via LinkedIn, Facebook, or X) what mental models have helped you make sense of AI!